Since 2008, over a hundred billion apps have been downloaded from Apple’s App Store onto users’ iPhones or iPads. Thousands of software developers have written these apps for Apple’s “iOS” mobile platform. However, the technology and tools powering the mobile “app revolution” are not themselves new, but rather have a long history spanning over thirty years, one which connects back not only to NeXT, the company Steve Jobs started in 1985, but also to the beginnings of software engineering and object-oriented programming in the late 1960s.

The NeXT Cube (1990) was a masterpiece of engineering… but too expensive. NeXT evolved into a software company after the Cube and several other NeXT hardware products failed in the marketplace. NeXT’s greatest innovation was the NeXTSTEP operating environment. CHM# 102626734

Apple’s iOS is based on its desktop operating system, Mac OS X. More importantly, iOS’s software development kit (SDK), known as “Cocoa Touch,” is based on the same principles and foundations as Mac OS X’s desktop SDK, Cocoa. (An SDK is the set of tools and software libraries that application developers use to build their apps. Commonly these come in the form of Application Program Interfaces, or “APIs,” which are interfaces or “calls” into functions provided by the platform’s built-in libraries.) OS X and Cocoa, which first shipped in March 2001, were in turn based on the NeXTSTEP (originally capitalized as “NeXTStep”) operating and development environment. NeXT was founded by Steve Jobs upon resigning from Apple after he had been stripped of power following an attempted boardroom coup. Both NeXTSTEP and NeXT’s computers were state of the art, but the computers were too expensive for the education market NeXT targeted.

Its hardware business flagging, by 1993 NeXT was forced to close down its factory, becoming a software company focused on custom applications development for the enterprise. The NeXTSTEP development platform, renamed “OpenStep,” was ported to other hardware and other operating systems, including Intel processors and Sun workstations.

In 1996, Apple was itself in dire straits, and needed to replace its aging Mac OS with a more modern and robust operating system. Failing to produce one of its own, Apple acquired NeXT in order to make NeXTSTEP the basis for what eventually became Mac OS X. In January 1997, at the annual Macworld Expo trade show, Steve Jobs triumphantly returned onstage as an Apple employee for the first time since 1985, this time to explain what he thought Apple needed to survive and become great again, and how NeXTSTEP technology could help Apple achieve it.

Video: Jobs demonstrates OpenStep, MacWorld Expo, January 1997

In this short 20-minute presentation at MacWorld Expo in January 1997, Jobs demonstrated the technology that would become Cocoa, the software development system that would eventually be used by thousands of iOS app developers around the world. Steve Jobs was showing Apple developers what their future would look like, one which, indeed, today’s iOS developers would find remarkably similar to their everyday experience. In fact, what Jobs was showing Apple developers in 1997 was not new, but had been released by NeXT almost a decade earlier, in 1988.

Video: Steve Jobs introduces the NeXTStep object-oriented development environment to the world

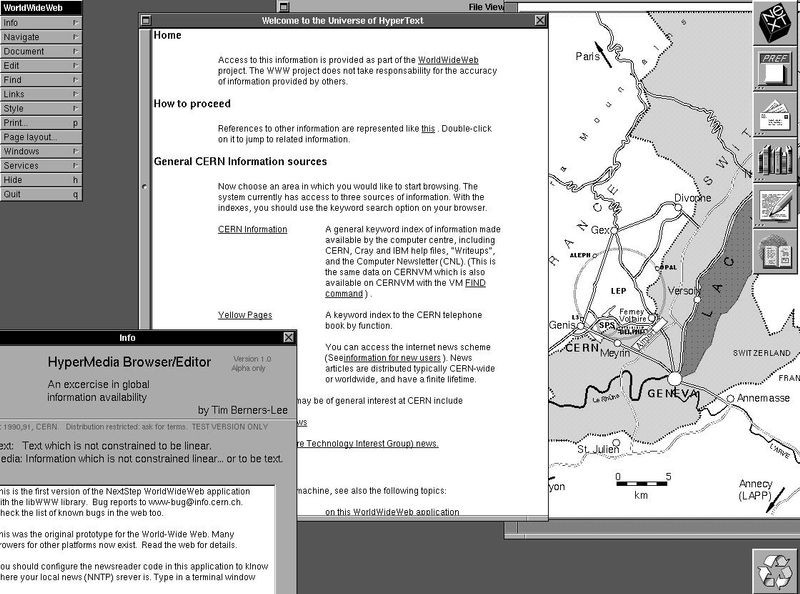

Indeed, NeXTSTEP had been such a productive development environment that in 1989, just a year after the NeXT Computer was revealed, Sir Tim Berners-Lee at CERN used it to create the WorldWideWeb.

Tim Berners-Lee’s first web browser/editor, running on NeXTSTEP

What made NeXT’s development environment so ahead of its time? At the 1997 MacWorld demo, Jobs told a little parable. By that point in time, it was well known in the computer industry that Jobs got the idea for the Macintosh’s graphical user interface when he and a team from Apple visited Xerox PARC in 1979. PARC, or “Palo Alto Research Center,” was a blue-sky computer research lab started by Xerox to create the “Office of the Future.”

PARC’s staff, led by CHM Fellow Robert Taylor, was a who’s who of leading computer scientists of the day (among them CHM Fellows Chuck Thacker, Butler Lampson, Bob Metcalfe, Lynn Conway, Charles Geschke, and John Warnock). Among these luminaries was CHM Fellow Alan Kay. Kay envisioned the “Dynabook,” a tablet-like computer that would be a dynamic medium for learning. Thacker and Lampson designed, with the technology available at the time, an “interim” Dynabook which might partially make real Kay’s ideas. The result was the Alto, a personal workstation designed for a single user, running the world’s first graphical user interface (GUI) with windows, icons, and menus, controlled using a mouse. The Xerox Alto, which visitors can see in CHM’s Revolution exhibit, and much of whose source code CHM has released to the public, was the progenitor of the way almost all desktop computer users interact with their machines today.

Xerox Alto CHM# 102626737, 102656238, X1741.99C, X1741.99D, X124.82C

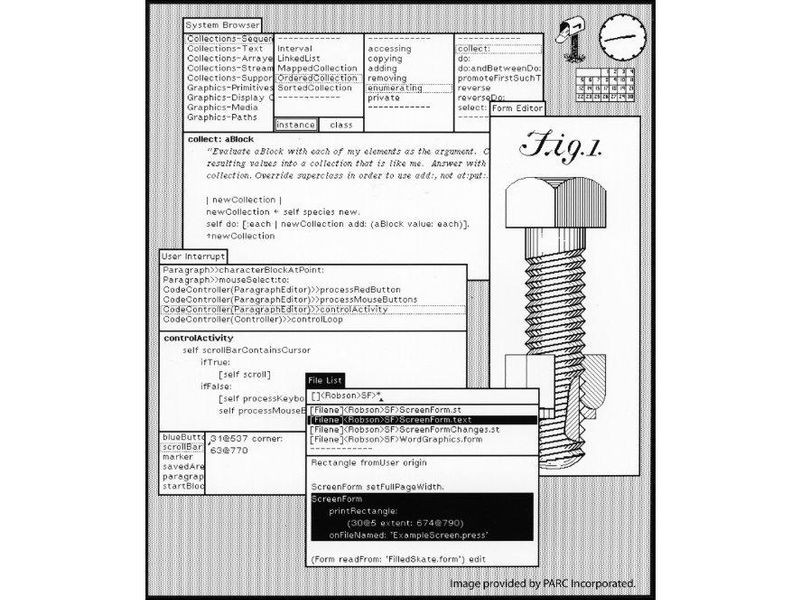

During the 1997 MacWorld demo, Jobs revealed that in 1979 he had actually missed a glimpse of two other PARC technologies that were critical to the future. One was pervasive networking between personal computers, which Xerox had with Ethernet, which it invented, in every one of its Alto workstations. The other was a new paradigm for programming, dubbed “object-oriented programming,” by Alan Kay. Kay, working with Dan Ingalls and Adele Goldberg, designed a new programming language and development environment that embodied this paradigm, running on an Alto. Kay called the system “Smalltalk” because he intended it to be simple enough for children to use. A program would consist of “objects” that modeled things in the real world, such as “Animal” or “Vehicle.” This differed from traditional “procedure-oriented” (or “procedural”) programming, where routines (“procedures”) operate on data inputs that are each stored separately. In Smalltalk, objects consisted of data grouped together with the routines (“methods”) that operated on that data. Kay imagined a program as a dynamic system of objects sending messages to each other. An object receiving a message would use it to select which of its many routines, or methods, to run. The same message sent to different objects would result in each receiving object executing its own routine, each different from the others. For example, a Dog object and a Cat object would respond to the “Speak” message differently; the Dog would run its “Bark” method while the Cat would run its “Meow” method.
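The Dog and Cat example can be sketched in a few lines of Python. This is only an illustration of the message-passing idea, not Smalltalk itself: the `send` helper and the class and method names are invented for this sketch, and Python's name lookup at run time stands in for Smalltalk's message dispatch.

```python
class Dog:
    def speak(self):
        # A Dog answers the "speak" message by running its own bark method.
        return self.bark()

    def bark(self):
        return "Woof"


class Cat:
    def speak(self):
        # A Cat answers the very same message with a different method.
        return self.meow()

    def meow(self):
        return "Meow"


def send(receiver, message, *args):
    """Hypothetical helper: deliver a message by name.

    The sender names only the message; the receiving object decides,
    at run time, which of its methods to execute.
    """
    method = getattr(receiver, message)
    return method(*args)


# The same message, sent to two different objects, gives two different results.
replies = [send(animal, "speak") for animal in (Dog(), Cat())]
```

Note that the sender never checks what kind of object it is talking to. That is the property Kay's design relies on: new kinds of objects can be added later without changing the code that sends the message.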

Smalltalk’s development environment was graphical, with windows and menus. In fact, Smalltalk was the exact GUI that Steve Jobs saw in 1979. Smalltalk’s GUI was composed of just such a collection of interacting objects that we discussed. For example, a Window object could be sent the message “Draw,” which it would forward to all of the objects inside it, including Buttons and Sliders. Each of these objects would have its own particular method for drawing itself. During Jobs’ visit to PARC, he had been so enthralled by the surface details of the GUI that he completely missed the radical way it had been created with objects. The result was that programming graphical applications on the Macintosh would become much more difficult than doing so with Smalltalk. Said Jobs in his 1988 introduction of the NeXT Computer: “Macintosh was a revolution in making it easier for the end user. But the software developer paid the price… It is a bear to develop software… for the Macintosh… if you look at the time it takes to make [a GUI] application… the user interface takes 90% of the time.”
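The Window example described above might look roughly like this in Python. It is a sketch under loose assumptions: the class names follow the text, but the string-based "drawing" is a stand-in invented here for real graphics, and none of this is Smalltalk's or NeXT's actual code.

```python
class Button:
    def __init__(self, title):
        self.title = title

    def draw(self):
        # Each kind of object knows how to draw itself.
        return f"[ {self.title} ]"


class Slider:
    def __init__(self, value):
        self.value = value

    def draw(self):
        return f"<--{self.value}-->"


class Window:
    def __init__(self, title, contents):
        self.title = title
        self.contents = list(contents)

    def draw(self):
        # The window draws its own frame, then forwards the same
        # "draw" message to every object it contains.
        lines = [f"== {self.title} =="]
        lines.extend(item.draw() for item in self.contents)
        return lines


window = Window("Demo", [Button("OK"), Slider(50)])
rendered = window.draw()
```

The window does not need to know how a button or slider is drawn, so a new kind of control only has to supply its own `draw` method to fit in.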

Smalltalk-80 graphical user interface (GUI) and development environment, circa 1980, courtesy PARC library

With the NeXT computer, Jobs planned to fix this exact shortcoming of the Macintosh. The PARC technologies missing from the Mac would become central features on the NeXT. NeXT computers, like other workstations, were designed to live in a permanently networked environment. Jobs called this “inter-personal computing,” though it was simply a renaming of what Xerox’s Thacker and Lampson called “personal distributed computing.” Likewise, dynamic object-oriented programming on the Smalltalk model provided the basis for all software development on NeXTSTEP. According to Jobs in 1988, NeXTSTEP would reduce the time for a developer to create an application’s user interface from 90% down to 10%. Instead of using Smalltalk, however, NeXT chose Objective-C as its programming language, which would provide the technical foundation for the success of NeXT and Apple’s software platforms for the next two decades and beyond. Objective-C remains in use at Apple today for iOS and OS X development, though in 2014 Apple introduced its new Swift language, which may one day replace it.

Objective-C was created in the 1980s by Brad Cox to add Smalltalk-style object-orientation to traditional, procedure-oriented C programs.1 It had a few significant advantages over Smalltalk. Programs written in Smalltalk could not stand alone. To run, Smalltalk programs had to be installed along with an entire Smalltalk runtime environment—a virtual machine, much like Java programs today. This meant that Smalltalk was very resource intensive, using significantly more memory, and running often slower, than comparable C programs that could run on their own. Also like Java, Smalltalk programs had their own user interface conventions, looking and feeling different than other applications on the native environment on which they were run. (Udell, 1990) By re-implementing Smalltalk’s ideas in C, Cox made it possible for Objective-C programmers to organize their program’s architecture using Smalltalk’s higher level abstractions while fine-tuning performance-critical code in procedural C, which meant that Objective-C programs could run just as fast as traditional C programs. Moreover, because they did not need to be installed alongside a Smalltalk virtual machine, their memory footprint was comparable to that of C programs, and, being fully native to the platform, would look and feel the same as all other applications on the system. (Cox, 1991) A further benefit was that Objective-C programs, being fully compatible with C, could utilize the hundreds of C libraries that had already been written for Unix and other platforms. This was particularly advantageous to NeXT, because NeXTSTEP, being based on Unix, could get a leg up on programs that could run on it. Developers could simply “wrap” an existing C code base with a new object-oriented GUI and have a fully functional application. Objective-C’s hybrid nature allowed NeXT programmers to have the best of both the Smalltalk and C worlds.

What value would this combination have for software developers? As early as the 1960s, computer professionals had been complaining of a “software crisis.” A widely distributed graph predicted that the costs of programming would eclipse the costs of hardware as software became ever more complex. (Slayton, 2013, pp. 155–157) Famously, IBM’s OS/360 project had shipped late and over budget, and was horribly buggy. (Ensmenger, 2010, pp. 45–47, 205–206; Slayton, 2013, pp. 112–116) IBM produced a report claiming that the best programmers were anywhere up to twenty-five times more productive than the average programmer. (Ensmenger, 2010, p. 19) Programmers, frequently optimizing machine code with clever tricks to save memory or time, were said to be practitioners of a “black art” (Ensmenger, 2010, p. 40) and thus impossible to manage. Concern was so great that in 1968 NATO convened a conference of computer scientists at Garmisch, Germany to see if software programming could be turned into a discipline more like engineering. In the wake of the OS/360 debacle, CHM Fellow Fred Brooks, the IBM manager in charge of OS/360, wrote the seminal text in software engineering, The Mythical Man-Month. In it, Brooks famously outlined what became known as Brooks’ law: that after a software team reaches a certain size (and thus as the complexity of the software increases), adding more programmers will actually increase the cost and delay its release. Software, Brooks claimed, is best developed in small “surgical” teams led by a chief programmer, who is responsible for all architectural decisions, while subordinates do the implementation. (Brooks, 1995)

By the 1980s, the problems of cost and complexity in software remained unsolved. It appeared that the software industry might be in a perpetual state of crisis. In 1986, Brooks revisited his thesis and claimed that, despite modest gains from improved programming languages, there was no single technology, no “silver bullet” that could, by itself, increase programmer productivity by an order of magnitude—the 10x improvement that would elevate average programmers to the level of exceptional ones. (Brooks, 1987) Brad Cox begged to differ. Cox argued that object-oriented programming could be used to create libraries of software objects that developers could then buy off-the-shelf and then easily combine, like a Lego set, to create programs in a fraction of the time. Just as interchangeable parts had led to the original Industrial Revolution, a market for reusable, off-the-shelf software objects would lead to a Software Industrial Revolution. (Cox, 1990a, 1990b) Cox’s Objective-C language was a test bed for just such a vision, and Cox started a company, Stepstone, to sell libraries of objects to other developers.

Steve Jobs and his engineers at NeXT saw that Cox’s vision was largely compatible with their own, and licensed Objective-C from Stepstone. Stepstone and later NeXT engineer Steve Naroff did the heavy lifting to modify the language and compiler for NeXT’s needs.2 But rather than buy libraries from Stepstone, NeXT developed their own set of object libraries using Objective-C, and bundled these “Kits” with the NeXTSTEP operating system as part of its software development environment. The central graphical user interface library that all NeXT developers used to construct their applications was the ApplicationKit, or AppKit. In conjunction with AppKit, NeXT created a visual tool called “Interface Builder” that gave developers the ability to connect the objects in their programs graphically.

As part of his 1997 MacWorld presentation, Jobs demonstrated how easily one could build an app using Interface Builder, a process familiar to any iOS developer today. Jobs simply dragged a text field and a slider from a palette into a window, then dragged from the slider to the text field to make a “connection,” selecting a command for one object to send to the other. The result was that the text field was now hooked up to the slider, displaying in real-time the numerical value, between 1 and 100, which the slider’s position represented. Jobs demonstrated this all without writing a single line of code, driving home his point: “The line of code that the developer could write the fastest… maintain the cheapest… that never breaks for the user, is the line of code the developer never had to write.” Today, the AppKit is still the primary application framework on OS X, and iOS’s UIKit is heavily modeled on it. Interface Builder, too, still exists, as part of Apple’s Xcode Integrated Development Environment (IDE).
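What Interface Builder records when a developer drags a connection from the slider to the text field can be approximated in Python. This is a simplified model, not AppKit's API: `connect`, `set_position`, and `take_int_value_from` are hypothetical names chosen for this sketch, and the 1-to-100 range is taken from the description of the demo above.

```python
class TextField:
    def __init__(self):
        self.text = ""

    def take_int_value_from(self, sender):
        # The "command" chosen for the connection: copy the sender's value.
        self.text = str(sender.int_value)


class Slider:
    def __init__(self, minimum=1, maximum=100):
        self.minimum = minimum
        self.maximum = maximum
        self.int_value = minimum
        self.target = None   # object that will receive the message
        self.action = None   # name of the message to send

    def set_position(self, fraction):
        """Move the knob; fraction runs from 0.0 (left) to 1.0 (right)."""
        fraction = min(max(fraction, 0.0), 1.0)
        span = self.maximum - self.minimum
        self.int_value = round(self.minimum + fraction * span)
        # Whenever the value changes, send the stored action to the target.
        if self.target is not None and self.action is not None:
            getattr(self.target, self.action)(self)


def connect(source, target, action):
    """Hypothetical stand-in for dragging a connection between two objects."""
    source.target = target
    source.action = action


slider, field = Slider(), TextField()
connect(slider, field, "take_int_value_from")
slider.set_position(1.0)   # drag the knob all the way to the right
```

The connection is just data, a target and a message name, which is why a graphical tool can create it and the developer writes no glue code for it.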

The combination of Objective-C, AppKit, and Interface Builder allowed Steve Jobs to boast that NeXTSTEP could make developers five to ten times more productive—precisely the order of magnitude improvement Brooks had claimed could not be achieved. Jobs assumed his audience at Macworld in 1997 was familiar with Brooks. “You’ve all read the Mythical Man-Month,” he told the audience. “As your software team is getting bigger, it sort of collapses under its own weight. Like a building built of wood, you can’t build a building built of wood that high.”

Using this metaphor of a building, Jobs brilliantly explained the comparative advantage of NeXTSTEP’s AppKit. Programming for DOS was the equivalent of starting at the ground floor, he argued, and an app developer might add three floors of functionality to achieve four floors of capability. The classic Mac OS, with its Toolbox APIs, effectively raised the foundation to the fifth floor, allowing a developer to reach eight floors of functionality. This, Jobs said, was what enabled the creation of killer applications like PageMaker on the Mac (1985), which, in conjunction with laser printers, did much to create the entirely new market of desktop publishing. Jobs insisted that it was this capacity for developer innovation, the ability to enable new kinds of applications on Apple’s platform that simply could not exist elsewhere, that Apple needed to foster if it was to survive and grow.

The problem, Jobs continued, was that Microsoft Windows had caught up to the Mac. Windows NT effectively provided a seventh floor base, outcompeting the Mac. Here is where Jobs saw NeXT coming to Apple’s rescue. NeXTSTEP, with its Interface Builder tool along with the AppKit and other object-oriented libraries, provided so much rich functionality out of the box that they raised the developer up to the twentieth floor, claimed Jobs. This meant that developers could eliminate 80% of the code that all graphical applications share in common, allowing them to focus on the 20% of the code that made their app unique and provided additional value to users. The result, Jobs insisted, would be that a small team of two to ten developers could write an app as fully featured as a hundred-person team working for a large corporate software company like Microsoft.

I argue that this vision outlined by Jobs is in fact remarkably similar to Fred Brooks’ notion of programming with small surgical teams led by a “chief” programmer. The difference is that rather than having the chief programmer delegate the grunt work to subordinate coders, as Brooks described, in Jobs’ vision, that work was handled by libraries of objects. In actuality, this was Cox’s vision too, except that whereas Cox intended for objects to be purchased on an open market, NeXT bundled its object libraries as part of the NeXTSTEP operating system and development environment, which might actually inhibit the formation of an after-market for objects. In the vision of both Cox and Jobs, the grunt work of making an application was offloaded to the developers of the objects; nobody in a small team needed to be a mere “implementer,” forced to work on the program’s “foundation.” Unlike procedural code units, it was precisely the black-boxed, encapsulated nature of objects (which prevented other programmers from tampering with their code) that enforced the modularity that allowed them to be reused interchangeably. Developers standing on any given floor simply were not allowed to mess with the foundation they stood on. Freed from worrying about the internal details of objects, developers could focus on the more creatively rewarding work of design and architecture that had been the purview of the “chief” programmer in Brooks’ scheme. All team members would start at the twentieth floor and collaborate with each other as equals, and their efforts would continue to build upward, rather than be diverted to redoing the floors upon which they stood.

Was this promise of 5 to 10x improvement a pipe dream? We have already seen that NeXTSTEP had been used as a rapid prototyping tool to create the first version of the WorldWideWeb. Though NeXTSTEP had not found a large user base, it had been very well received by programmers, especially in academia. There was also a die-hard community of third-party NeXT developers backing Jobs up with their products. Small shops like OmniGroup, Lighthouse Design, and Stone Design, teams no larger than eighteen in the case of Lighthouse, and a single man in the case of Stone, had written fully featured applications such as spreadsheets, presentation software, web browsers, and graphic design tools. Moreover, NeXTSTEP had proved so productive for rapid development of “mission-critical” custom applications that Wall Street banks and national security organizations like the CIA were paying thousands of dollars per license for it.

Four months later, at a fireside chat at Apple’s May 1997 Worldwide Developers Conference, Jobs said that Lighthouse (which had since been acquired by Sun) proved that NeXTSTEP technology provided the five to ten times speed improvement for implementing existing apps. Moreover, the more compelling advantage was that NeXTSTEP would allow some innovative developer to create something entirely new, which could not have been developed on any other platform initially, and which could not be replicated on other platforms without huge effort. NeXTSTEP was what Tim Berners-Lee had used to create the WorldWideWeb, and what Dell used to create its first eCommerce website in 1996. This was how NeXTSTEP’s object-oriented development environment would power innovation on Apple platforms well into the twenty-first century.

Looking back from the perspective of 2016, Steve Jobs was remarkably prescient. After Mac OS X shipped on Macintosh personal computers, small-scale former NeXT developers and shareware Mac developers alike began to write apps using AppKit and Interface Builder, now called “Cocoa.” These developers, taking advantage of eCommerce over the Web, began to call themselves independent or “indie” software developers, as opposed to the large corporate concerns like Microsoft and Adobe, with their hundred-man teams. In 2008, Apple opened up the iPhone to third-party software developers and created the App Store, enabling developers to sell and distribute their apps directly to consumers on their mobile devices, without having to set up their own servers or payment systems. The App Store became ground zero for a new gold rush in software development, inviting legendary venture capitalist firm Kleiner Perkins Caufield & Byers to set up an “iFund” to fund mobile app startups. (Wortham, 2009) At the same time, indie Mac developers like Andrew Stone and Wil Shipley predicted that Cocoa Touch and the App Store would revolutionize the software industry around millions of small-scale developers.

Unfortunately, in the years since 2008, this utopian dream has slowly died: as unicorns, acquisitions, and big corporations moved in, the mobile market has matured, squeezing out the little guys who refuse investor funding. With hundreds of competitors in the App Store, it can be extremely difficult to get one’s app noticed without expensive external marketing. The reality is that a majority of mobile developers cannot sustain a living by making apps, and most profitable developers are contractors writing apps for large corporations. Nevertheless, the object-oriented technology Jobs demoed in 1997 is today the basis for every iPhone, iPad, Apple Watch and Apple TV app. Did Steve Jobs predict the future? Alan Kay famously said, “The best way to predict the future is to invent it.” Cyberpunk author William Gibson noted, “The future is already here—it’s just not evenly distributed.” NeXT had already invented the future back in 1988, but because NeXT never shipped more than 50,000 computers, only a handful were lucky enough to glimpse it in the 1990s. Steve Jobs needed to return to Apple to distribute that future to the rest of the world.

In today’s Silicon Valley, with its focus on innovation and the future, the deep histories of such technologies as Apple’s Cocoa development environments are often forgotten. However, understanding the past is vitally important for inventing the future, for chances are, the future has already been invented; one just needs to do a little digging. At the Computer History Museum, our mission is to preserve this past, not just the physical hardware (we have a number of NeXT computers and peripherals), but also the software that is the soul of these machines. CHM has a small collection of NeXT software, including NeXTSTEP 3.3, OpenStep 4.2, Enterprise Objects Framework 1.1 and WebObjects 3.0 on CD, but we are lacking earlier versions of NeXTSTEP, and we have little in the way of NeXT applications. Filling out the collection is important because software is the contextual link between computers, users, and the institutions and society they are embedded in. “Software is history, organization, and social relationships made tangible,” as computing historian Nathan Ensmenger has written. (Ensmenger, 2010, p. 227) Thus, beyond preservation, it is also vital to make meaningful the stories of computer software’s legacy and contextualize it in the culture of its time. This is my first blog post as CHM’s Curator for its new Software History Center, and it marks the beginning of my project to collect and interpret materials, software, and oral histories related to graphical user interfaces, object-oriented programming, and software engineering, starting with a focus on NeXT, Apple, and Xerox PARC. Look forward to more stories like this in this space, and if you are a former NeXT, Apple, or Xerox PARC engineer, and would like to contribute to this project, please contact me at hhsu@computerhistory.org!