Cover of CORE 2015. From: Computer History Museum

Moore’s “Law” is not a law of nature or science but an observation made in 1965 by Gordon E. Moore, then Director of the Fairchild Semiconductor Research and Development Laboratories in Palo Alto, CA. It evolved over the years and emerged as one of the most familiar maxims in techdom. As a state of mind, it has served as a driving principle of the semiconductor electronics industry over the last 50 years.

On April 19, 1965, Electronics magazine published an article by Moore that projected the growth in the complexity of integrated circuits (popularly called ICs, microchips, or computer chips) over the next ten years. Written earlier as an internal document titled “The Future of Integrated Electronics” to encourage his company’s customers to adopt the most advanced technology in their new computer designs, his prediction emerged as a self-fulfilling prophecy that guided the actions and goals of industry executives worldwide. Venture capitalist Steve Jurvetson has described a figure illustrating the article as “the most important graph in human history.” [1]

In CORE 2015, the journal of the Computer History Museum, experts in the computing field explore the history, legacy, social impact, and future of the “Law.” This blog offers a summary of how it has evolved since 1965.

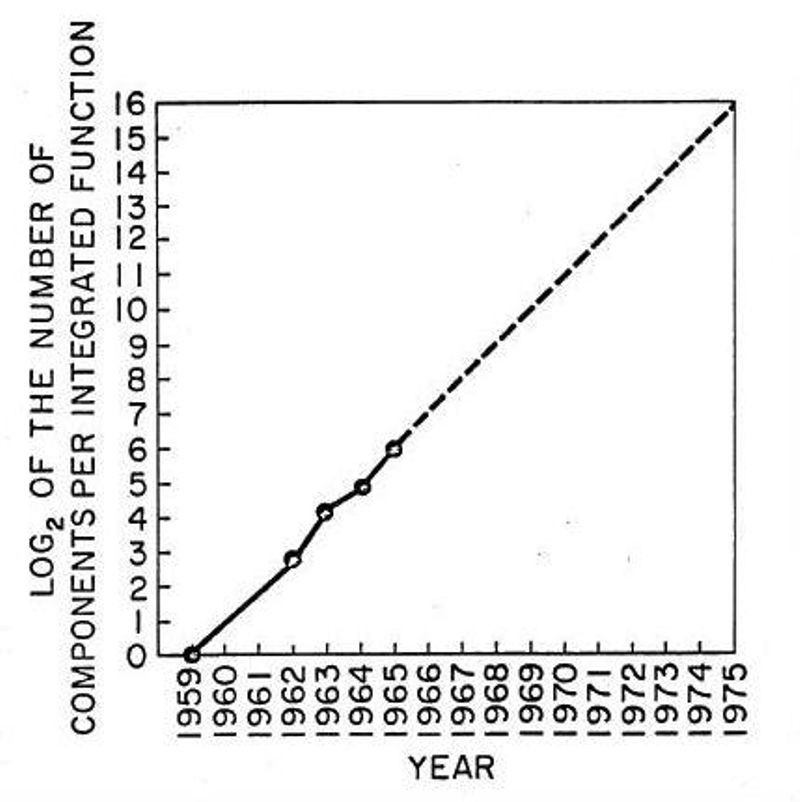

Graph printed in 1965 Electronics magazine. From: Fairchild internal document

Under the title “Cramming more components onto integrated circuits,” Moore predicted “the development of integrated electronics for perhaps the next ten years.” [2] He plotted a graph of the maximum number of components that Fairchild technologists had been able to squeeze onto a silicon computer chip at minimum cost per component since the development of the company’s groundbreaking planar manufacturing process in 1959 until 1965. Drawing a line through just five data points he projected that “with unit cost falling as the number of components per circuit rises, by 1975 economics may dictate squeezing as many as 65,000 components on a single silicon chip.” This represented a doubling every 12 months.
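
The arithmetic behind that projection is easy to check. The short Python sketch below is an illustration added here, not something taken from Moore's article. The function name `projected_components` is hypothetical, and the 1965 starting figure is back-calculated only from the 65,000-component target and the ten-year span quoted above.

```python
def projected_components(start_count, years, doubling_period_years=1.0):
    """Component count after `years`, doubling once per `doubling_period_years`."""
    return start_count * 2 ** (years / doubling_period_years)


# Figures quoted in the text: about 65,000 components by 1975, projected from 1965.
target_1975 = 65_000
span_years = 1975 - 1965

# Ten annual doublings multiply the count by 2**10 = 1024, so the
# 1965 starting point implied by the projection is the target divided by 1024.
implied_1965 = target_1975 / 2 ** span_years

if __name__ == "__main__":
    print(f"Growth factor over {span_years} years: {2 ** span_years}")
    print(f"Implied 1965 component count: {implied_1965:.1f}")
    # Illustrative round starting value (an assumption, not a figure from the article).
    print(f"From 64 components: {projected_components(64, span_years):.0f}")
```

Ten annual doublings multiply the component count by 1,024, so a 1975 figure of about 65,000 implies a starting point of a little over 60 components on a chip in 1965.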

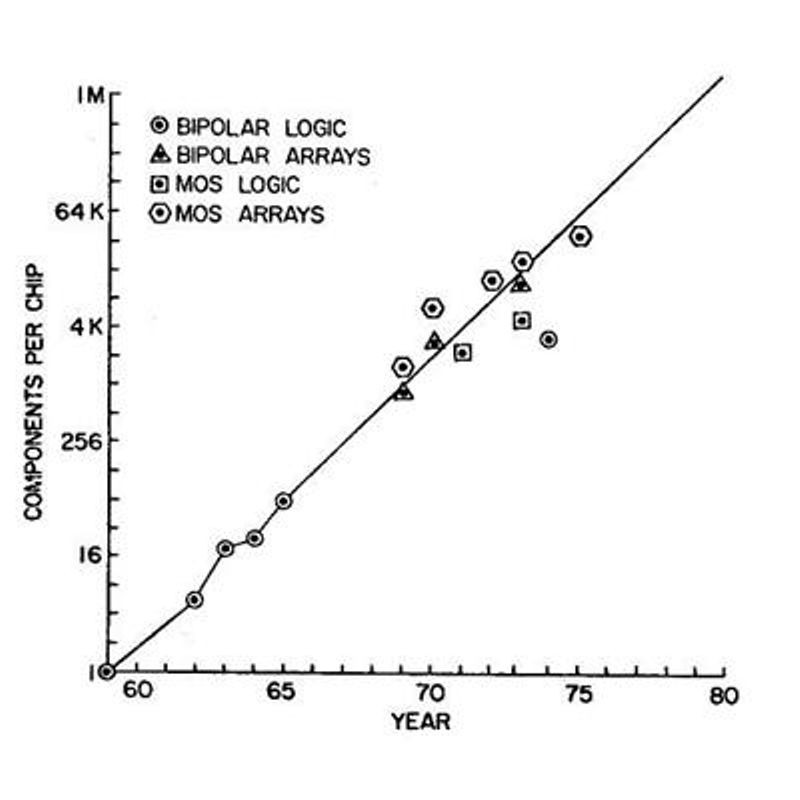

Actual component count in 1975 compared to 1965 prediction. From: IEEE, IEDM Tech Digest (1975)

At the 1975 IEEE International Electron Devices Meeting, Moore, by now co-founder responsible for R&D at Intel Corporation, noted that advances in photolithography, wafer size, process technology, and “circuit and device cleverness,” had allowed his projection to be realized. On adding subsequent products to his original handful of simple logic ICs, notably important new devices such as microprocessors and memories, Moore modified the trend and reduced his estimate of the future rate of increase in complexity to “a doubling every two years, rather than every year.” [3]

After Caltech electrical engineering professor Carver Mead dubbed this projection “Moore’s Law,” industry technologists and managers worldwide were challenged with delivering annual breakthroughs in optics, materials science, methods of wafer processing, circuit design techniques, manufacturing and test equipment, and management of complex industrial operations to ensure compliance with its projections. On reviewing the status of the industry again in 1995 (at which time an Intel Pentium microprocessor held nearly 5 million transistors) Moore concluded that “The current prediction is that this is not going to stop soon.” [4]

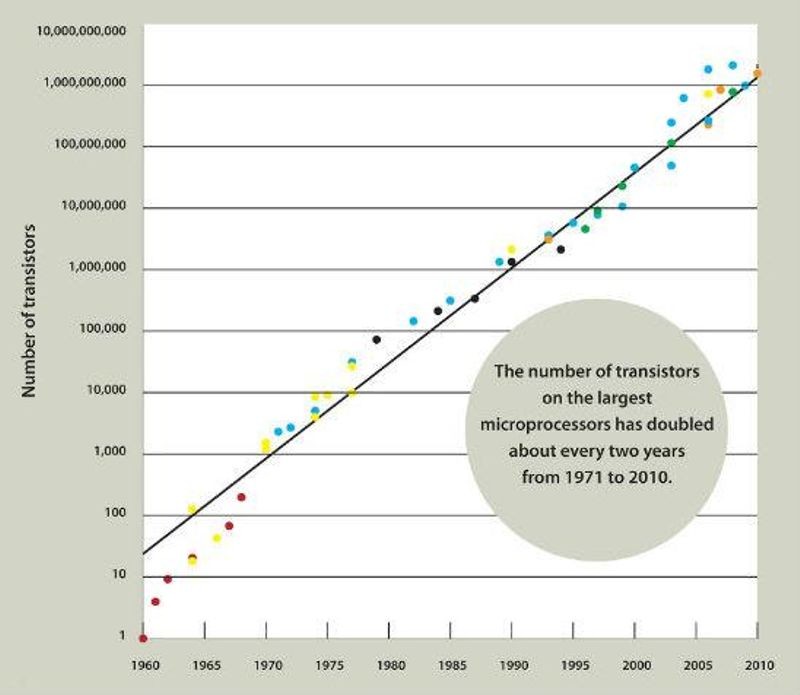

Transistor count on largest microprocessors 1975-2010. From: Computer History Museum Revolution exhibit

Former Intel CEO Craig Barrett remarked in 2002 that “We don’t adhere to Moore’s Law for the hell of it. It’s a fundamental expectation that everybody at Intel buys into… We simply don’t accept the growing complexity of the challenge as an excuse not to keep it going.” [5] AMD and Intel microprocessors continued on that track through 2010, when products from both companies held more than one billion transistors. A recent chip from graphics company Nvidia holds over nine billion.
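
As a rough check on that track record, the two transistor counts quoted in this post (nearly 5 million in 1995, more than one billion in 2010) can be turned into an implied doubling time. The Python sketch below is an illustrative calculation, not one drawn from the cited sources. The function name `doubling_time_years` is hypothetical, and the inputs are the rounded figures from the text, which refer to different products.

```python
import math


def doubling_time_years(count_start, count_end, years_elapsed):
    """Average years per doubling implied by growth from count_start to count_end."""
    doublings = math.log2(count_end / count_start)
    return years_elapsed / doublings


# Rounded figures from the text (assumed here to be exact for illustration):
# about 5 million transistors in 1995, about one billion in 2010.
implied = doubling_time_years(5e6, 1e9, 2010 - 1995)

if __name__ == "__main__":
    print(f"Implied doubling time: {implied:.2f} years")
```

With these rounded inputs the result comes out just under two years per doubling, in line with the rate Moore settled on in 1975.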

Industry pundits have long noted that the physical characteristics of the atomic structure of ICs and the cost of the equipment and facilities required to fabricate them herald the eventual demise of continued advances at the same rate. Moore himself commented in 2003 that “No exponential change continues forever.” [6] A recent survey noted that only “one fourth of semiconductor business leaders believe Moore’s Law will continue for the foreseeable future.” Some claim that it has already ended. [7] However, as Intel senior fellow Mark Bohr recently revealed [8] plans for moving from today’s chips that have a minimum feature size of 14 nanometers (nm) down to 7 nm (a strand of human DNA is 2.5 nm in diameter, a carbon atom is 0.3 nm), it appears that Moore’s Law will prevail at least through the end of this decade.

Whatever the future may hold, in its relentless drive to adhere to the tenets of Moore’s Law the competitive nature of the semiconductor industry has made the transistor fabricated on a microchip the most frequently manufactured human artifact in history. According to David Brock of the Chemical Heritage Foundation, “Estimates of the number of transistors produced in a single year now match, or exceed, estimates of the total number of all the grains of sand on all the world’s beaches. With computing devices made of microchips, the price of computing has fallen over a million-fold, while the cost of electronics has fallen a billion-fold.” [9]

Moore’s Law: The Life of Gordon Moore, Silicon Valley’s Quiet Revolutionary claims that the digital revolution forged by this wealth of low-cost computing power has “introduced a dazzling menagerie of transformations and possibilities. … As the average adult today spends around half of waking time immersed in electronic interactions, does this alter us fundamentally? With the silicon transistor impinging upon every facet of our material existence, how are we being shaped in expectation and action? Moore’s Law has been a singularly important enabler of our lives, changing the meaning of being human.” [10]

In 2012 Texas Instruments donated five of Gordon Moore’s Fairchild patent notebooks to the Computer History Museum collection. Spanning the period 1957 through 1968, they are hand-numbered 1 through 6. Number 5, which would have covered the years from 1963 to 1965 when he was gathering data for the Electronics article, is missing. We would appreciate any information as to its current location.